Software Machine Tools

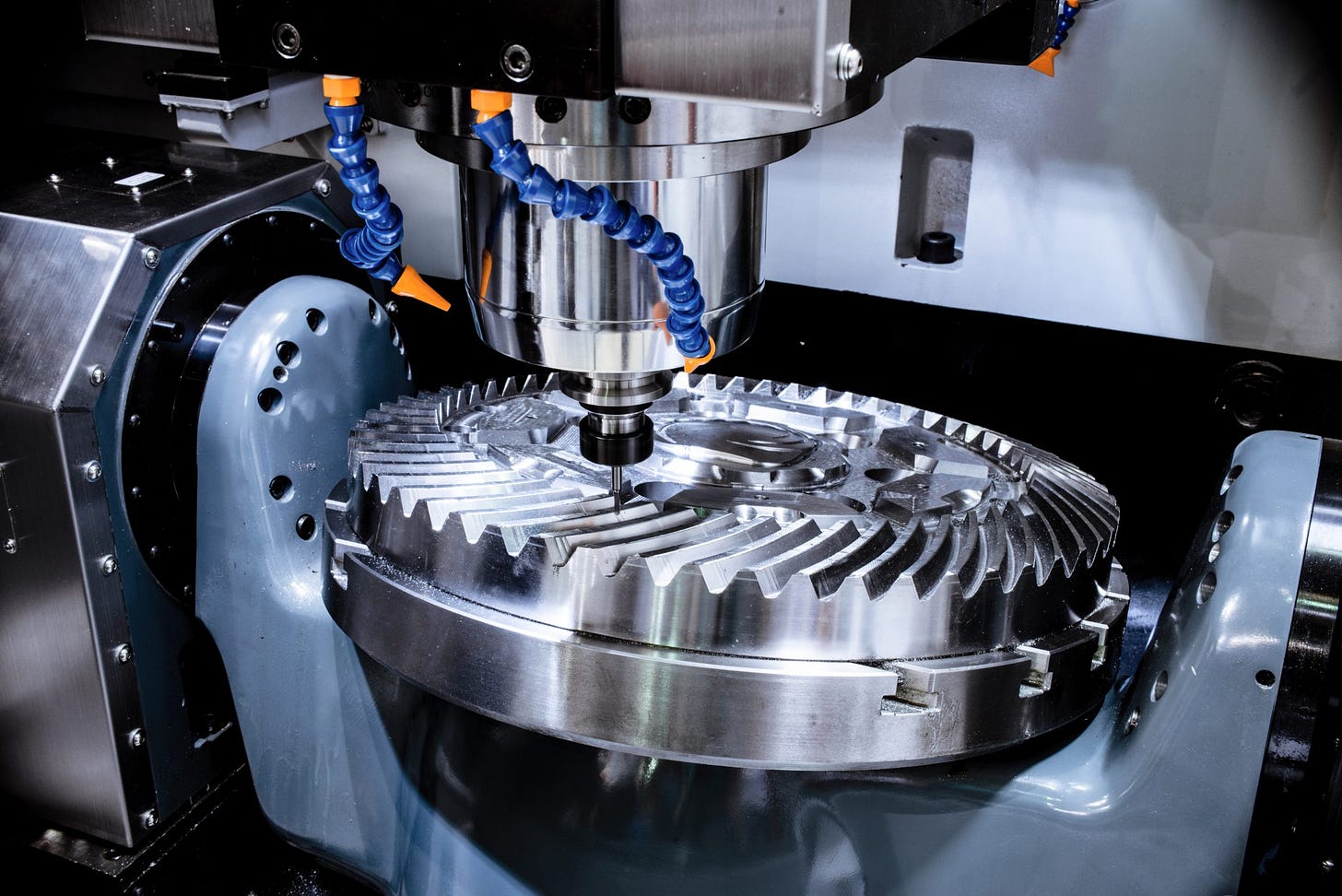

Manufacturing is shaped by machine tools – the machines that make the machines. There is a wide variety of such tools, with a wide variety of tradeoffs and limitations, and there are no real universal tools.

If you want high-volume, cheap manufacturing, you may want to use progressive stamping, where a flat sheet of metal is shaped and cut, step by step, by hard metal dies driven by a hydraulic press. If you can design your part such that it can be made by simply pressing and cutting a flat sheet of metal into shape with this simple process, it can be made absurdly cheaply and efficiently.

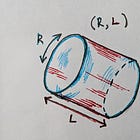

If you need something with far greater complexity than a simple flat sheet can provide, then there are a variety of other options – casting, lathes, CNC milling, 3D printing, and more. There also are many very special-purpose machines for making things like bolts, screws, wires, pipes, and more in bulk. A 3D printer may be able to make a part with an incredibly complex and intricate geometry, but it will generally be very slow, with limited choice of material, and often producing parts full of defects, voids, and weak spots. A lathe simply spins an object while moving a cutting head – great for making objects with a degree of rotational symmetry, though creative machinists have spent centuries finding ways to push this machine to do a surprising range of things.

And while you can hand a 3D printer any arbitrary 3D model to slice and print, there are quite a few things that you can’t print in practice. Objects with floating parts or overhangs can sometimes be salvaged with support structures or by reorienting the part, but for the most part are beyond the scope of what a 3D printer can do. Material choice is usually pretty limited, and most printers don’t support more than one material at a time.

Sci-fi has dreamed for decades of atomic replicators and molecular assembly machines: effectively, 3D printers that assemble objects atom by atom. An alternate route was originally proposed by Robert Heinlein in his story Waldo (1942), where a scientist builds controllable mechanical arms, which then build smaller arms, which build even smaller arms, and so on until they reach down to the molecular scale. However, the machines from Waldo (often referred to as “waldoes”) run into problems; among other issues, physical processes work differently across scales, and a design that works well at one scale may work very poorly at another.

Atomic replicators, even if we could build them, still run into many of the same problems as 3D printers. Simply because you can specify every atom in an object with an atomic number and X,Y,Z coordinates does not mean that you can actually place atoms in that arrangement. You must factor in how the atoms interact with each other and the tool itself, you must factor in how the atoms move around once they’ve been placed, you must factor in whether or not a molecule is stable enough to hold itself together when it’s only been half-built. Any manufacturing process, no matter how exotic, has the constraint that the object it’s building must not only be stable as a final product, but also must be stable at every step throughout the entire process of forming it.

An atomic assembly process would also undoubtedly have limits in terms of what materials and temperature ranges it can work in. Whatever processes produced and shaped the parts of this machine can also unshape it, and may be outside the domain of what it can actually assemble. Can your extremely sensitive atomic assembly machine safely handle highly corrosive fluorine? Can it handle radiation? Can it print dry ice at -110 degrees? Can it print at 1500 degrees? Can it print a glass of water? Can it print a cell without the cell membrane bursting like a bubble when only half-built?

I don’t think it’s unreasonable to say that your 3D atomic assembly machine would not be able to print a running nuclear fusion reactor, hundred-million-degree plasma and all. You could perhaps print the reactor and pump in the plasma afterward, but that is already a concession: plasma, no matter how hot, is just a cloud of atoms at specific positions with specific (albeit very high) velocities, and a truly universal assembler would have to place those too.

Of course, all of this completely sidesteps the question of how you would encode the design for atomically-precise objects. Whatever you manufacture would require either an atomically-precise hard drive many times larger than the object you’re attempting to print, or a substantial amount of data compression. Either way, some amount of computation will be required to read this data, decompress it if necessary, compute positions of atoms, plan and schedule the arrangement of atoms and assembly arms (or whatever mechanism actually does the assembly), and put each atom in place. This computation will almost certainly have a thermodynamic cost. Technically speaking, the movement of the atoms would itself count as a type of computation whose thermodynamic footprint would need to be factored in. Depending on the approach, this kind of molecular assembly could therefore produce enormous amounts of waste heat and consume enormous amounts of energy, which would need to be managed (likely by means of even more energy) to prevent melting the object or the tool itself.
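To get a feel for the storage problem, here is a back-of-envelope sketch. The encoding is purely an assumption for illustration (one byte for the atomic number plus three 32-bit coordinates per atom, no compression), and the object is assumed to be one gram of pure carbon.

```python
# Back-of-envelope estimate. The per-atom encoding below is an assumption
# chosen for illustration, not a proposal for a real format.
AVOGADRO = 6.02214076e23       # atoms per mole
CARBON_MOLAR_MASS_G = 12.011   # grams per mole of carbon

BYTES_PER_ATOM = 1 + 3 * 4     # assumed: 1-byte atomic number + three 32-bit coordinates


def uncompressed_bytes(mass_grams: float) -> float:
    """Bytes needed to list every atom of a pure-carbon object of the given mass."""
    atoms = mass_grams / CARBON_MOLAR_MASS_G * AVOGADRO
    return atoms * BYTES_PER_ATOM


size = uncompressed_bytes(1.0)
print(f"{size:.2e} bytes, about {size / 1e21:.0f} zettabytes")
```

Under these assumptions a single gram of carbon needs roughly 650 zettabytes before compression, which is why some form of compression or procedural description seems unavoidable.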

In short – there is no true “universal machine tool”, and even the craziest sci-fi dreams fall short.

Software Machine Tools

In computing, we can perhaps tell ourselves otherwise. Python is Turing-complete after all. In theory, we can build anything with it.

Matrix multiplication, paired with a simple nonlinearity, is close enough to Turing-complete: you can emulate any kind of if/else logic with it, and you can emulate any matrix math with enough if/else logic too. It might not be pretty, but it’s doable. A big enough matrix can emulate any amount of memory, and with enough layers you can emulate any arbitrarily long computation, and the inverses are equally true. There’s really no reason why, at least in principle, you couldn’t perfectly recreate all of Claude with conventional programming constructs – if/else, for/while, data structures, algorithms, arithmetic, strings, lookup tables, and so on. Doing arithmetic with actual arithmetic instructions would probably be more efficient, more understandable, and more correct than whatever crazy matrix math is emulating it.
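As a small illustration of emulating if/else with matrix math, here is a sketch of a one-bit `a if cond else b` built from two matrix products and a ReLU. The weight matrices and input layout are hand-picked for this example, not taken from any real model. Note that a nonlinearity is needed: matrix multiplication alone is linear and cannot express a branch.

```python
from itertools import product


def matvec(matrix, vector):
    """Plain matrix-vector product on nested lists."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def relu(vector):
    return [max(0, v) for v in vector]


# Input layout (an arbitrary choice for this sketch): [cond, a, b, 1]
W1 = [
    [1, 1, 0, -1],   # relu(a + cond - 1): equals a when cond == 1, else 0
    [-1, 0, 1, 0],   # relu(b - cond):     equals b when cond == 0, else 0
]
W2 = [[1, 1]]        # add the two branches together


def select(cond, a, b):
    """Computes `a if cond else b` for single bits using only matmul and ReLU."""
    hidden = relu(matvec(W1, [cond, a, b, 1]))
    return matvec(W2, hidden)[0]


# Check against a real if/else on every combination of input bits.
for cond, a, b in product((0, 1), repeat=3):
    assert select(cond, a, b) == (a if cond else b)
```

It works, but it also shows the point about efficiency: four multiply-adds and two comparisons stand in for what a CPU does with a single conditional move.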

The biggest barrier to building this is not the theoretical capabilities of the language, but rather a combination of the sheer volume of code that would need to be written and the lack of human understanding of how to write it. The whole reason why we use neural networks to solve these problems is simply that we have no idea how to build things like image classification and language models by more conventional means, and so we turn to a tool that can generate a program from a large enough volume of data.

We can consider the expressivity of a language as a matter of how many keystrokes, or perhaps how long a source file, it takes to accomplish a task. There’s of course a difference between these two metrics – autocomplete can remove some repetitive structure in code, and any code will require much more iteration, modification, and debugging than simply writing out every line once. Languages may vary in how expressive they are – small amounts of code may unfold into large amounts of machine code based on what language constructs exist. Languages may vary as well in how debuggable and understandable they are, though this is also a product of how the language is used. Manually writing out a table of neural network weights and running the matrix math will be more or less just as incomprehensible in C, Python, Rust, JavaScript, Assembly, or APL.

There is a recreational programming activity called “code golf”, where programmers attempt to solve problems in the minimum number of characters possible. There are even dedicated languages for code golf, which do fun things like define an empty source file to print “hello world”. At the opposite extreme, there is a notion of a “Turing Tarpit”; a system which is technically Turing-complete, but that is hopelessly impractical and inefficient to use. Many cellular automata can be argued to fall into this category, for example.

Expressivity is also a matter of the skill of the user. Writing was invented many thousands of years ago – any words that can be dreamt up can be written down. Theoretically, the solutions to all the world’s problems are mere words away, if only they can be elucidated. The solutions to all the problems faced in the year 1,000,000 AD could have been pressed into clay tablets 5,000 years ago, if only someone had thought to do so. The tightest bottleneck of all, by a profoundly enormous margin, is not in the pen or the paper or the ink, or the stylus or the clay or the hand, but rather in the brain behind it all.

Humans engineer things based on what we understand. People have been trying to solve image classification problems for decades, and there is a vast array of “feature engineering” methods for hand-writing algorithms to recognize shapes, objects, faces, and gestures. Then in 2012 a convolutional network called AlexNet was trained on a pair of GPUs and began thoroughly obliterating all previous methods in benchmarks. There is some family of tricks that the neural network has learned to do that is more powerful than all the techniques we spent decades working on, and well over a decade later we still don’t know what they all are. Of course, there are also many problems on which AI tools have not yet delivered despite stubborn and persistent effort – the elusive “AGI” being the most obvious one.

The fact that a programming language is “Turing-complete” and “universal” means that in theory it can be used to build anything, but there is absolutely no obligation for this to have any truth in practice. If we want to solve problems that we do not know how to engineer with conventional programming constructs, it may take many orders of magnitude fewer keystrokes, and decades less research, to simply assemble a training set and pump it into a neural network than to implement everything from scratch.

Thus I propose the concept of a Software Machine Tool. There are more ways to build software than conventional programming languages, and neural networks are a clear example. The asymptotic capabilities may all be universal as we approach infinite effort and skill, but different tools can have vastly different capabilities in practice. No one has time to write a trillion lines of code to reimplement Claude or Sora, and training a neural network to correctly and efficiently replicate a browser or terminal or operating system is similarly insane.

The implication, of course, is that there are presumably many more such tools that we have not yet discovered. Consider the immense value that programming languages have provided for the world in the nearly 70 years since FORTRAN was invented. Consider the immense impact being generated by AI now. Consider what would happen if we found a third class of software machine tools orthogonal in capabilities to either of these, and what that may unlock.

To some extent, the current wave of AI coding agents is arguably a third category of software machine tool, albeit one with aspects of the other two. Exactly what can be efficiently built is still very heavily constrained by the limitations of conventional programming languages, and the training set of code that has been written with them. There are undoubtedly many world-changing algorithms that have yet to be discovered, that have not been added to this training set, and that cannot be synthesized on-demand by an LLM. Asking an LLM to write out a table of embeddings with strange properties may be off the table, even if that table would be a relatively small amount of code. On the other hand, the sheer volume of code that can be produced, the iteration speed, and the expressiveness of natural language are a difference in quantity that can perhaps create at least a slight difference in kind.

There are other options as well. I actually was not someone who was terribly surprised by AI coding tools when they first appeared, as I had been keeping an eye on code synthesis research which had been going on for many years before. The format and scale were unexpected; I expected something smaller in scale and more constraint-based: “generate code that provably satisfies this strange and difficult set of properties”. Done well, this kind of approach is still likely very viable and would be able to generate some very strange things that may be well outside the domain of LLM training sets. My guess is it’s more limited by the imagination of what kinds of useful problems can be productively framed in such a way.

Superoptimizers are another interesting approach; rather than writing tens of millions of lines of hand-crafted compiler optimization and analysis passes, simply write a relatively small tool for verifying program equivalence (in general undecidable, but there are decidable subsets of this that can cover the majority of real-world cases pretty easily, as well as ways of covering all cases with conservative-but-safe assumptions), and a search algorithm, such as MCMC or a genetic algorithm. This lets you frame code optimization as a matter of “find the fastest possible sequence of instructions I can pump into the CPU that is provably equivalent to this code.” The main thing that has held this back historically is that it’s “too slow” to be practical. However, it’s very amenable to caching (optimize your code overnight, pull from the cache during the day, only recent changes run slow), and the standard for “too much compute” from a few years ago is basically “less compute than Claude spends generating a single token”.
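To make the shape of this concrete, here is a deliberately tiny sketch of a superoptimizer. Everything in it is a simplification I am assuming for illustration: the four-instruction 8-bit machine is made up, the search is plain brute-force enumeration rather than MCMC or a genetic algorithm, and "verification" is just testing all 256 possible inputs, which only works because the toy machine is so small.

```python
from itertools import product

MASK = 0xFF  # toy 8-bit machine: small enough to verify on every input

# Hypothetical instruction set: each op maps (acc, x) -> new acc,
# where x is the unmodified input and acc starts out equal to x.
OPS = {
    "shl1": lambda acc, x: (acc << 1) & MASK,
    "add_x": lambda acc, x: (acc + x) & MASK,
    "sub_x": lambda acc, x: (acc - x) & MASK,
    "neg": lambda acc, x: (-acc) & MASK,
}


def run(program, x):
    """Execute a sequence of op names on input x and return the accumulator."""
    acc = x
    for name in program:
        acc = OPS[name](acc, x)
    return acc


def equivalent(program, reference):
    """The 'verifier': exhaustive comparison over all 256 inputs."""
    return all(run(program, x) == reference(x) for x in range(MASK + 1))


def superoptimize(reference, max_len=5):
    """Return a shortest program matching `reference`, or None if none is found."""
    for length in range(max_len + 1):
        for program in product(OPS, repeat=length):
            if equivalent(program, reference):
                return program
    return None


def times_seven(x):
    """The 'slow' reference implementation we want to replace."""
    return (x * 7) & MASK


best = superoptimize(times_seven)
print(best)
```

On this instruction set the search returns a four-instruction program, for example three left shifts followed by a subtract (8x - x), without anyone having told it that trick. A real superoptimizer replaces the exhaustive check with a proper equivalence checker and the enumeration with a smarter search, but the structure is the same: a small verifier plus a search loop.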

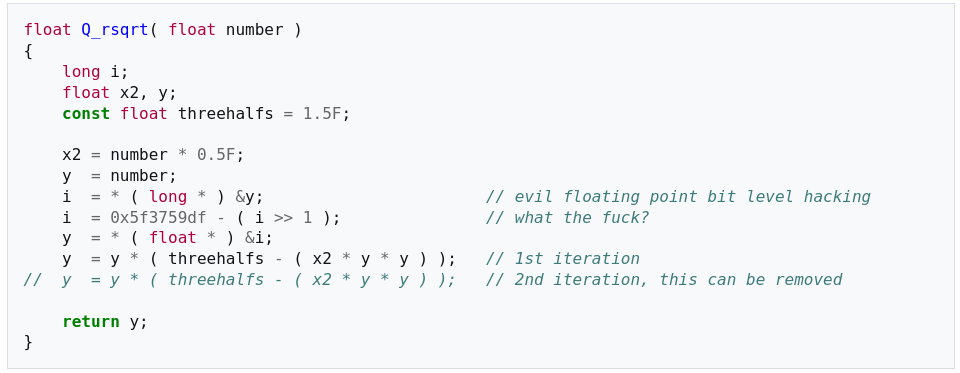

Superoptimizers also are notorious for generating code that is very fast; they can exploit tricks that no human would ever have imagined, that no human would have programmed into a compiler, but they also can generate code that no human can easily understand. Imagine something like the notorious fast inverse square root algorithm, but for every line of code.
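For anyone who hasn’t seen it, here is the fast inverse square root trick transcribed into Python. The original is C operating on raw 32-bit floats; the `struct` round-trips below are just my way of imitating that bit reinterpretation, so treat this as an illustration rather than something you would ever use for speed in Python.

```python
import struct


def fast_inv_sqrt(x: float) -> float:
    """Approximate 1/sqrt(x) for positive x using the well-known bit trick."""
    # Reinterpret the bits of a 32-bit float as an unsigned integer.
    i = struct.unpack("<I", struct.pack("<f", x))[0]
    # The "magic constant" step: a shift and a subtract on the raw bits
    # gives a rough first guess at 1/sqrt(x).
    i = 0x5F3759DF - (i >> 1)
    # Reinterpret the integer bits as a float again.
    y = struct.unpack("<f", struct.pack("<I", i))[0]
    # One Newton-Raphson iteration to refine the guess.
    return y * (1.5 - 0.5 * x * y * y)


for value in (0.25, 1.0, 2.0, 100.0):
    print(value, fast_inv_sqrt(value), value ** -0.5)
```

The result is accurate to within a fraction of a percent, and nothing about the code explains why. That is the flavor of output to expect from a superoptimizer.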

If we’re considering general code synthesis, we could even generalize this. Perhaps you need code that has a particular set of properties, properties so difficult or unusual that you don’t even know how to write the code. As long as you have a tool that can verify whether or not a function has these properties, and perhaps define a gradient or error function to help navigate the search, a superoptimizer should be able to help. You wouldn’t be limited to just matrix multiplication, as in machine learning; you could have code with control flow, data structures, loops, etc.

There’s another very interesting option. Rather than focusing as much on the specific operations a function performs, why not instead put effort into the particular subspace of input/output pairs and how we map meaning onto them? This is fundamentally what neural networks operate on with embeddings of various kinds, and to a weaker extent it’s what we do with things like C-style enums and simple formats like ASCII. Another notable example to illustrate my broader point would be linear codes in error correction; if you simply choose the correct table of magic constants with special properties, you can solve difficult error-correction problems with very simple and efficient algorithms.
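The classic small example of such a table is the Hamming(7,4) code, sketched below. The parity-check matrix `H` is the standard one whose columns are the binary numbers 1 through 7; the function names and bit layout (parity bits at positions 1, 2, and 4) are choices made for this sketch.

```python
# Parity-check matrix for Hamming(7,4): column j (1-based) is the binary
# representation of j. This small table is the whole "trick".
H = [
    [1, 0, 1, 0, 1, 0, 1],  # bit 0 of the position
    [0, 1, 1, 0, 0, 1, 1],  # bit 1 of the position
    [0, 0, 0, 1, 1, 1, 1],  # bit 2 of the position
]


def encode(data):
    """4 data bits -> 7-bit codeword, parity bits at positions 1, 2, 4."""
    d1, d2, d3, d4 = data
    p1 = (d1 + d2 + d4) % 2   # checks positions 3, 5, 7
    p2 = (d1 + d3 + d4) % 2   # checks positions 3, 6, 7
    p4 = (d2 + d3 + d4) % 2   # checks positions 5, 6, 7
    return [p1, p2, d1, p4, d2, d3, d4]


def syndrome(word):
    """Multiply by H mod 2; the result is the 1-based position of a single
    flipped bit, or 0 if the word is a valid codeword."""
    bits = [sum(h * w for h, w in zip(row, word)) % 2 for row in H]
    return bits[0] + 2 * bits[1] + 4 * bits[2]


def decode(word):
    """Correct up to one flipped bit, then extract the 4 data bits."""
    fixed = list(word)
    position = syndrome(fixed)
    if position:
        fixed[position - 1] ^= 1
    return [fixed[2], fixed[4], fixed[5], fixed[6]]


codeword = encode([1, 0, 1, 1])
corrupted = list(codeword)
corrupted[4] ^= 1                       # flip one bit in transit
assert decode(corrupted) == [1, 0, 1, 1]
```

The decoder contains no search and no special cases. One matrix-vector product over the table tells you exactly which bit to flip, because the table was chosen so that every single-bit error produces a distinct, meaningful syndrome.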

There are perhaps a lot more problems than we realize that can be addressed this way, but the question of how you generate the table of magic numbers, as well as the corresponding function that operates over them, is a difficult problem. We have some methods of addressing this with machine learning, but these neural networks are often very large and expensive, not the kind of thing that you can casually operate on in your code. Linear codes are extremely tiny and cheap. There’s a very large amount of unmapped territory in the middle.

More generally, there’s a sense in which RAM isn’t a very natural form of memory, at least compared to plain bit vectors inside of a circuit. If we look to more emerging and exotic forms of computing – neural networks, but also quantum and reversible computing – these often require memory to be a small vector of bits or variables that we apply gates to individually. We take RAM for granted in conventional computing, but it actually requires very large amounts of routing and addressing circuitry to implement. AI algorithms evidently have some way of representing hierarchically structured information even without pointers and random access as we would recognize it, instead just embedded in the matrix operations. If you just have a big vector of a few hundred or thousand bytes sitting in a CPU register, and you can apply some large, complex operations to it, what kinds of data structures and representations can you embed in that? And what kinds of unconventional properties might you be able to get out of it?

Code Analysis

While not strictly something for constructing code, there’s certainly a very large amount of room for better static analysis in programming languages. Modern optimizing compilers are full of extensive static analysis, and a great deal of this is directly relevant to the same kinds of properties that matter for the correctness of your code. There’s just no language-level interface for interacting with this at all, and the tools the compiler offers often are not really designed for casual use. Even something as stupidly basic as asking “what assembly does this function compile to?” is hard enough that there’s a popular web tool designed to do a task that should really be a standard CLI command in every serious compiler.
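For contrast, Python is one of the few mainstream languages where a version of this question has a built-in answer, at least at the bytecode level rather than machine code. A minimal sketch using the standard library `dis` module (the `clamp` function is just a made-up example):

```python
import dis


def clamp(value, low, high):
    """A small example function to inspect."""
    return max(low, min(value, high))


# Print the bytecode that CPython compiled this function to.
dis.dis(clamp)

# The same listing is available programmatically, which makes it easy to
# build small analyses on top of it.
opnames = [instruction.opname for instruction in dis.get_instructions(clamp)]
print(opnames)
```

The exact opcodes vary between Python versions, and bytecode is a long way from optimized assembly, but the ergonomics are the point: one import, one call, and the answer is both printable and queryable from code.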

Debugging ultimately is the process of determining the difference between the code that you think that you wrote and the code that you actually wrote. This will only get dramatically more important as more code is written by AI. It’s hard to improve things that you can’t measure, and it’s not always easy to be aware that they even exist if you’ve never even seen them. Visibility into code today is pretty poor in general.

Further, a tremendous amount of innovation simply comes from viewing old problems from new perspectives. I’ve written before about how much geometric structure there is underneath the symbolic surface of code; if we can bring in tools to make this more obvious, and help people better understand this structure in their code, it may inspire thinking of new degrees of freedom to poke at. That may expand what people write with conventional code, but text is often a poor format for strictly geometric structure. There’s nothing stopping you from writing down a table of coordinates and shapes, but translating back and forth between shapes and coordinates in your head is surely difficult and error-prone. Many simple transformations would require precisely shifting things around, requiring rewriting all of the coordinates and constraints. It’s easier to just handle this with a graphical tool and automate it all.

I’ve been hard at work the past couple months on my chip startup, but some momentum is starting to build and interesting work is getting done.